Sometimes people say there’s an 80:20 rule for knowledge.

That dramatically understates the reality.

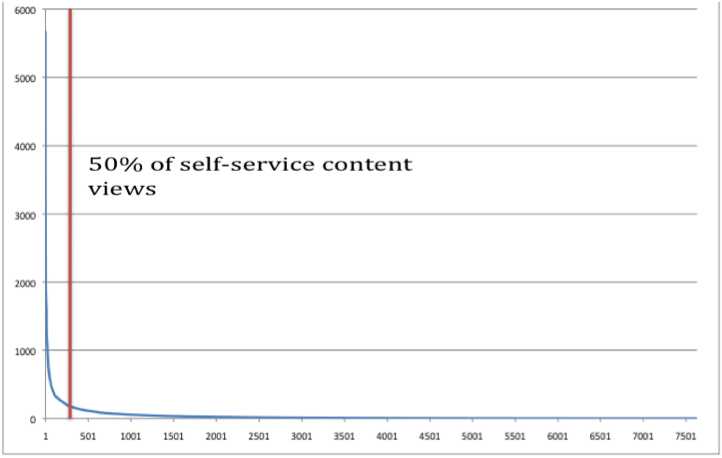

The graph above shows a pretty typical pattern of knowledge reuse. Out of tens of thousands of knowledgebase articles, only about 7500 were viewed even once in a given month. Of these 7500, only about 300 articles represented 50% of the views. That’s about 4% of the active knowledgebase, and only 1% of the total knowledgebase.

These statistics make it pretty clear: a one-size-fits-all approach to knowledge simply doesn’t make sense.

For your big hitters, the “short head,” invest. Fix the product. Do multimedia how-tos. Create process wizards. Build smart content that can implement fixes for the customer. At the very least, make the knowledge as accurate and clear as you can. This content is used hundreds or thousands of times a month, so the ROI is there.

For the long tail, ruthless efficiency is the name of the game. This is where KCS and its idea of “capture in the workflow” are so important. Long tail content is valuable, but not valuable enough to justify much investment. So it is crucial that customer-facing staff can capture knowledge with little or no impact on case closing time.

At first, you don’t know what’s going to be short head content. So create it efficiently in the workflow, and then let usage data, like the graph above, tell you where to invest.
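As a rough illustration of letting usage data pick the short head, here is a minimal Python sketch. It assumes you can export one month of view counts as a mapping from article ID to views; the function name `short_head`, the article IDs, and the 50% default are illustrative choices, not part of KCS or of any particular knowledgebase product.

```python
from typing import Dict, List


def short_head(views_by_article: Dict[str, int], share: float = 0.5) -> List[str]:
    """Return the most-viewed article IDs that together reach `share` of all views."""
    # Articles with no views this month are not part of the active knowledgebase.
    active = {a: v for a, v in views_by_article.items() if v > 0}
    total = sum(active.values())
    head: List[str] = []
    running = 0
    # Walk articles from most to least viewed; ties are broken by ID
    # so the result is deterministic.
    for article_id, views in sorted(active.items(), key=lambda kv: (-kv[1], kv[0])):
        if running >= share * total:
            break
        head.append(article_id)
        running += views
    return head


# Hypothetical monthly export: article ID -> view count.
monthly_views = {"kb1": 50, "kb2": 30, "kb3": 10, "kb4": 10, "kb5": 0}
head = short_head(monthly_views)
active_count = sum(1 for v in monthly_views.values() if v > 0)
print(head, f"{len(head) / active_count:.0%} of active articles")
```

Run against the figures in the post, a function like this would return roughly 300 IDs out of about 7500 active articles, which is the 4% quoted above. Those are the candidates for investment; everything else stays in the efficient, capture-in-the-workflow lane.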

The importance of this graph can’t be overstated! However, there are some other techniques that can be used to effectively increase the “footprint” of your “long tail” KB articles. Few articles stand alone – they are related in some way to other articles or to other resources on your site. By making those connections explicit you give users yet another path to discovery.

For example, an article on our site about a particular way to configure a VPN will be connected to dozens of other similar-yet-different articles. We create “master” articles which serve as an index — in some cases also linked to troubleshooting or process wizards. These master articles are often boosted in search results to lead customers to an overall “topic” vs individual search results. In this way customers get a much broader introduction to what’s available. These topic collections also provide a framework (outside of normal KCS flows) to focus attention on review/update cycles.

Keith –

Excellent comment, and I think you’re absolutely right. A nuance that the original post skipped over is that it’s not just long tail (A loop) content that turns into short head (B loop) content. As you point out, there may be a collection of long-tail issues that are related to a common customer issue, and for those, what you call “master” articles, created outside of the workflow, become hugely valuable short head content.

In this, modern versions of KCS have been very influenced by Livia Wilson of Outsights and their concept of normalization and resolution flows.

One half-twist on this concept, though, is how you come to the list of the “master” articles, and where they link. To the extent that I understand normalization, the emphasis is on the “smart guys in a room” approach, whereas KCS would suggest that the long tail articles come first, and based on a thoughtful review of what’s actually happening in the caseload, the “master” topics bubble up from the foam of long-tail knowledge reuse.

That’s a pretty subtle difference in emphasis, though. Fundamentally, I agree: master articles are generally one of the most important elements of the short head; they’re great “best bets” or directed documents; and they serve also as a “framework to focus [SME] attention.”

Thanks for the comment! dbk

Coming from a technology perspective, I think this long tail effect is being encouraged by very poor search technology. When the findability of relevant articles is low, users either give up or find only a small portion of what may be of interest in their particular case. Being able to measure how long users spend viewing an article can give you additional insight into whether that single view in the month delivered “the answer” or “what has this got to do with my issue?”
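One way to sketch the viewing-time idea in Python, assuming your analytics can export one (article ID, seconds viewed) pair per view. The function names and the 15-second cutoff are hypothetical; a sensible threshold would depend on how long your articles are.

```python
from collections import defaultdict
from statistics import median
from typing import Dict, Iterable, List, Tuple

ViewEvent = Tuple[str, float]  # (article ID, seconds spent on the page)


def median_dwell(view_events: Iterable[ViewEvent]) -> Dict[str, float]:
    """Median viewing time per article, in seconds."""
    by_article = defaultdict(list)
    for article_id, seconds in view_events:
        by_article[article_id].append(seconds)
    return {article_id: median(times) for article_id, times in by_article.items()}


def likely_misses(view_events: Iterable[ViewEvent], min_seconds: float = 15.0) -> List[str]:
    """Articles whose median viewing time is below an assumed cutoff."""
    dwell = median_dwell(view_events)
    return sorted(a for a, m in dwell.items() if m < min_seconds)


# Hypothetical export from a web analytics tool.
events = [("kb1", 120), ("kb1", 90), ("kb2", 4), ("kb2", 6), ("kb2", 200)]
print(likely_misses(events))
```

The median is used rather than the mean so that one reader who left the tab open does not hide a pattern of quick bounces. A short view is only a hint, of course: a very short article may deliver “the answer” in a few seconds.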

Until we can improve search systems, I think that periodically reviewing search terms and the articles they deliver to users can be valuable in helping understand how real users are using the system and whether it is delivering the relevant articles that you know are available. I encounter people all the time who have figured out the quirks of their own search technology and thus believe that customers are having the same success as they are. This is just not true.
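A periodic search-term review could start from something like the following Python sketch. It assumes a search log with one (query, clicked article ID or `None`) pair per search; that log format, the function name `queries_to_review`, and both thresholds are illustrative assumptions rather than features of any specific search product.

```python
from collections import Counter
from typing import Iterable, List, Optional, Tuple

SearchEvent = Tuple[str, Optional[str]]  # (query text, clicked article ID or None)


def queries_to_review(
    events: Iterable[SearchEvent],
    min_searches: int = 3,
    max_click_rate: float = 0.2,
) -> List[Tuple[str, int, float]]:
    """Frequent queries whose results are rarely clicked, most frequent first."""
    searches: Counter = Counter()
    clicks: Counter = Counter()
    for query, clicked in events:
        # Normalize case and whitespace so near-identical queries are grouped.
        normalized = " ".join(query.lower().split())
        searches[normalized] += 1
        if clicked is not None:
            clicks[normalized] += 1
    flagged = []
    for query, count in searches.items():
        rate = clicks[query] / count
        if count >= min_searches and rate <= max_click_rate:
            flagged.append((query, count, rate))
    return sorted(flagged, key=lambda row: (-row[1], row[0]))


# Hypothetical search log.
log = [
    ("VPN setup", None), ("vpn  setup", None), ("vpn setup", None),
    ("reset password", "kb7"), ("reset password", "kb7"), ("reset password", None),
]
print(queries_to_review(log))
```

Queries that show up on this list are the ones worth running by hand, as a customer would, to see whether the relevant articles you know exist actually come back.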