A cornerstone KCS quality measure is the Article Quality Index (AQI). We calculate the AQI by sampling a handful of articles captured by licensed knowledge developers and checking them against an article quality checklist. If you sample five of my articles, and there are ten criteria on the checklist, you’ve checked 50 items. If I missed a total of two items across those 50, then my score is 100% – (2 / 50) = 96%. Not bad! We like to see individuals’ AQIs above 90%, or put more negatively, we like to see an error rate of less than 10%.
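If you'd rather let a script do the arithmetic, here is a minimal Python sketch of the calculation just described. The function and parameter names are my own for illustration and don't come from any KCS tool.

```python
def article_quality_index(missed_items: int, articles_sampled: int,
                          checklist_criteria: int) -> float:
    """Return the AQI as a percentage of checklist items that were NOT missed.

    Hypothetical helper: assumes every sampled article is checked against
    every criterion on the checklist.
    """
    items_checked = articles_sampled * checklist_criteria
    if items_checked <= 0:
        raise ValueError("need at least one article and one criterion")
    if not 0 <= missed_items <= items_checked:
        raise ValueError("missed_items must be between 0 and items_checked")
    # 100% minus the error rate, written as a single fraction.
    return 100.0 * (items_checked - missed_items) / items_checked


# The example from the text: five articles, ten criteria, two misses.
aqi = article_quality_index(missed_items=2, articles_sampled=5,
                            checklist_criteria=10)
print(f"AQI: {aqi:.0f}%")  # AQI: 96%
```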

Of course, capturing articles is just one piece of KCS. The AQI doesn’t tell us if people are linking well, or capturing when they should be, or improving content. Perhaps it’s time to expand our vision and create a Resolution Quality Index (RQI).

Instead of just sampling articles, let’s sample cases. Specifically, let’s pick three different types of cases:

- Cases with a link to existing knowledge

- Cases with a link to newly-captured knowledge

- Cases with no link to knowledge

For cases with a link to existing knowledge, we’ll look at three things. First, link accuracy: are the linked article(s) relevant, and is at least one of them Solve Loop content that describes the customer’s specific resolution? (Just linking to the user manual doesn’t count.) Second, improvement loss: was there an opportunity to update the article that should have been taken, but wasn’t? It’s not necessary to go on a witch hunt here, but look for giveaways like customer emails that say, “in your case, you have to download this file before performing step five,” or for information in the case that’s different from information in the article’s environment. Finally, link timeliness: if you can tell in your case tracking system, was searching and linking happening early in the case, or only after the customer’s issue had already been resolved? (HT to Devra Struzenberg for this idea.)

For cases with a link to newly-captured knowledge, we’ll apply our existing article quality checklist. We’ll also check capture timeliness: as with link timeliness, if our technology supports it, we’ll look for when the article content was captured, and how much it was edited post-call. Some post-call editing is inevitable, but Capture in the Workflow tells us that most of the information should be in place by the time the case is closed.

For cases with no link to knowledge, the question is, should there have been a link? If the answer is no—for example, if we were just re-issuing a license key or if the customer never responded to a request for information—that’s legitimate nonparticipation. But, if there was an article available that wasn’t linked, that’s reuse loss, and if one should have been created, that’s capture loss.
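To make the sorting concrete, here is a rough Python sketch of how a reviewer's judgments on sampled cases could be tallied into the categories above. The `SampledCase` fields and the `classify` function are hypothetical names of my own, not part of any case tracking system, and the sketch leaves out improvement loss and the two timeliness checks.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass
class SampledCase:
    """One reviewed case; every field is a reviewer's judgment (hypothetical schema)."""
    link: str                             # "existing", "new", or "none"
    link_accurate: Optional[bool] = None  # only meaningful when link == "existing"
    knowledge_needed: bool = True         # only meaningful when link == "none"
    article_existed: bool = False         # only meaningful when link == "none"


def classify(case: SampledCase) -> str:
    """Map a sampled case to one of the categories described in the post."""
    if case.link == "existing":
        return "accurate reuse" if case.link_accurate else "inaccurate reuse"
    if case.link == "new":
        return "capture"
    if case.link == "none":
        if not case.knowledge_needed:
            return "legitimate nonparticipation"
        return "reuse loss" if case.article_existed else "capture loss"
    raise ValueError(f"unknown link type: {case.link!r}")


# A made-up sample, purely for illustration.
sample = [
    SampledCase(link="existing", link_accurate=True),
    SampledCase(link="existing", link_accurate=False),
    SampledCase(link="new"),
    SampledCase(link="none", knowledge_needed=False),
    SampledCase(link="none", article_existed=True),
    SampledCase(link="none"),
]
tally = Counter(classify(case) for case in sample)
for category, count in tally.items():
    print(f"{category}: {count}")
```

Cases tagged "capture" would still go through the regular article quality checklist; this only sorts them into the right bucket.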

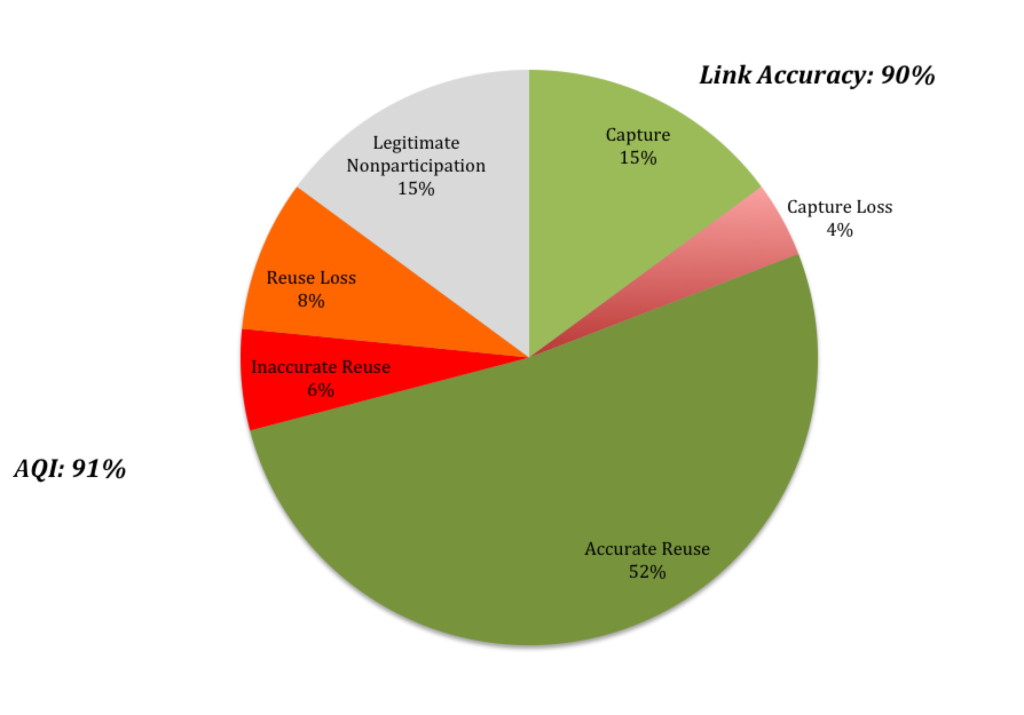

Having finished this sampling process, you’ll have an overall view of how well people are following the KCS problem resolution process. To help visualize this, look at this pie chart of cases:

If these ideas sound familiar, they should: this is the kind of analysis provided by the New vs. Known Study described in the KCS Practices Guide. What’s new, though, is using this like the AQI, as a coaching tool. Rather than just doing it once every six months, coaches or knowledge domain experts can sample a few of each kind of case to see if there’s a pattern of capture loss, reuse loss, or inaccurate reuse, and can provide feedback accordingly.

This does take more time, and a bit more subject expertise, than the traditional AQI process. But I think the coaching opportunities would be more than worth it.

ps – Thanks to my colleagues working on the KCS Practices Guide v5.3 update for a vigorous and informative conversation on this topic. You inspired this post!

Thanks David! This is an excellent write up and measurements such as this truly help promote a ‘we are all knowledge workers’ culture.

Nice work David! It is critical to establish an AQI baseline and ongoing sampling at the team and individual level.

Great stuff – this proposition of RQI is hot!

Looking at the three case types I wonder: When we’re talking about ‘knowledge’ it seems to me we mean the one and only KB, of which the customer can see the parts we decide.

Where does other knowledge fit in? Engineering TOIs, myriad intranet sites, and public, external references all come to mind. When the support engineer links those but not a doc in the KB itself, is that a straight capture loss? Or is there partial credit?

Roger –

That’s a great question. You’re right, the tone of this post makes it sound like I’m talking about THE knowledge base, but there are other resources to which the person working the case can link. Recent updates to the KCS Practices Guide specify that you can link to information that’s in a maintained repository, is specific (i.e., not the manual), and is accessible and in the context of the audience using the content. (I’m paraphrasing; the details are in Reuse Technique 4: Linking, subhead “Linking an Incident to Non-KCS Content”).

So the bottom line is that those links would count as reuse, in the green part of the pie chart.

Thanks for the careful read and good question!

Great work David! I really like the idea of RQI and will begin looking for ways to incorporate it into our KCS program. I did have one question about the pie chart example above that I hope you can clarify.

You noted that 15% of cases fell under Legitimate Nonparticipation. Could we then not remove those cases from the overall calculation since there was no knowledge activity required (billing question, carrier redirect, etc)? You could then calculate total Inaccurate Reuse, Reuse Loss and Capture Loss as impacting items (18%) and Accurate Reuse and Capture as non-impacting items (67%) and get an RQI of 79%.

This would allow some wiggle room for those groups that may take a larger portion of non-tech related calls where no knowledge is required.

Steve –

Thanks for the vote of confidence (from someone who lives in the real world with this kind of stuff). I’m looking forward to hearing about your experiences.

You’re right, it’s an excellent practice to take obviously-not-knowledge-related cases out of the mix altogether, if you have reliable-enough case categorization that you can safely eliminate (say) billing questions or carrier redirects. This is true for Participation Rate, too. Good catch.

d
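For anyone who wants to check the arithmetic in Steve's question, here is a small Python sketch of an RQI that leaves legitimate nonparticipation out of the denominator. Treating RQI as "non-impacting share of the remaining cases" is the reading suggested in that comment, not an official KCS formula, and the function name is made up for illustration.

```python
def rqi_excluding_nonparticipation(non_impacting_pct: float,
                                   impacting_pct: float) -> float:
    """RQI as a percentage, ignoring cases that needed no knowledge activity.

    non_impacting_pct: accurate reuse plus capture (67 in the comment above)
    impacting_pct: inaccurate reuse plus reuse loss plus capture loss (18)
    """
    considered = non_impacting_pct + impacting_pct
    if considered <= 0:
        raise ValueError("no knowledge-related cases to score")
    return 100.0 * non_impacting_pct / considered


rqi = rqi_excluding_nonparticipation(non_impacting_pct=67, impacting_pct=18)
print(f"RQI: {rqi:.0f}%")  # RQI: 79%
```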

Great stuff!

I have been having similar thoughts, and referring to these activities as “Content Consciousness.” Instead of clocking-in, tripping through day, and clocking-out, a knowledge-worker is engaged in his/her work. The level of “Content Consciousness” depends on numerous factors such as aptitude of the person, training, coaching, communication, reporting, leadership, and personal mood.

From a leadership standpoint, we want our people to have a highly accurate reuse and capture rate. However, most leaders do not emphasize this. I would imagine in most organizations the emphasis is still on Handle Times, Resolution Rates, and Customer Sat.

Handle Times and Resolution Rates are, in essence, a by-product of the RQI or “Content Consciousness.” Similarly, Customer Sat has levels of Consciousness, and Customer Satisfaction is paramount to a leader’s communication, as well as the organization’s training, coaching, reporting and recognition.

Both CSAT and RQI (Content Consciousness) are affected by the individual’s disposition. Struggling to get your 6-year-old child to school, hay fever, winning $500 on a lottery ticket, or getting a new puppy all affect our moods and are reflected in CSAT … and the RQI. Happy employees work better. Hey Leaders, get to work!

“Content Consciousness” — I love it!